Building a recommender system

Building a recommender system

Agenda:

- What is a recommender system?

- Defining a metrics system [3 possible approaches]

- The popularity model

- Content based filtering

- Collaborative filtering

The problem

Some examples of where you might find / use a recommendation engine?

Global examples

* Predicting next cities to visit ( booking.com )

* Predicting next meetups to attend ( meetup.com )

* Predicting people to befriend ( facebook.com )

* Predicting what adds to show you ( google.com )

etc..

Local examples

* Predicting future products to buy ( emag ;) )

* Predict next meals to have ( hipmenu ;) )

* Predicting next teams to follow ( betfair ;) )

etc..

Our data and use case

Let’s just say that I know many people from Amazon :D

We will be using a book dataset found here. It contains 10k books and 6 mil reviews (scores 1, 5).

Our task is to take those ratings and suggest for each user new things to read based on his previous feedback.

Formally

Given * a set of users [U1, U2, …] * a set of possible elements [E1, E2, … ] * some prior interactions (relation) between Us and Es ( {seen, clicked, subscribed, bought} or a rating, or a feedback, etc..),

for a given user U, predict a list of top N elements from E such as U maximizes the defined relation.

As I’ve said, usually the relation is an something numeric, business defined ( amount of money, click-through-rates, churn, etc..)

Loading the data

import pandas as pd

books = pd.read_csv("./Building a recommender system/books.csv")

ratings = pd.read_csv("./Building a recommender system/ratings.csv")

tags = pd.read_csv("./Building a recommender system/tags.csv")

tags = tags.set_index('tag_id')

book_tags = pd.read_csv("./Building a recommender system/book_tags.csv")

Data exploration

This wrapped function tells the pandas library to display all the fields

def display_all(df):

with pd.option_context("display.max_rows", 1000, "display.max_columns", 1000):

display(df)

Books are sorted by their popularity, as measured by number of ratings

display_all(books.head().T)

| 0 | 1 | 2 | 3 | 4 | |

|---|---|---|---|---|---|

| book_id | 1 | 2 | 3 | 4 | 5 |

| goodreads_book_id | 2767052 | 3 | 41865 | 2657 | 4671 |

| best_book_id | 2767052 | 3 | 41865 | 2657 | 4671 |

| work_id | 2792775 | 4640799 | 3212258 | 3275794 | 245494 |

| books_count | 272 | 491 | 226 | 487 | 1356 |

| isbn | 439023483 | 439554934 | 316015849 | 61120081 | 743273567 |

| isbn13 | 9.78044e+12 | 9.78044e+12 | 9.78032e+12 | 9.78006e+12 | 9.78074e+12 |

| authors | Suzanne Collins | J.K. Rowling, Mary GrandPré | Stephenie Meyer | Harper Lee | F. Scott Fitzgerald |

| original_publication_year | 2008 | 1997 | 2005 | 1960 | 1925 |

| original_title | The Hunger Games | Harry Potter and the Philosopher's Stone | Twilight | To Kill a Mockingbird | The Great Gatsby |

| title | The Hunger Games (The Hunger Games, #1) | Harry Potter and the Sorcerer's Stone (Harry P... | Twilight (Twilight, #1) | To Kill a Mockingbird | The Great Gatsby |

| language_code | eng | eng | en-US | eng | eng |

| average_rating | 4.34 | 4.44 | 3.57 | 4.25 | 3.89 |

| ratings_count | 4780653 | 4602479 | 3866839 | 3198671 | 2683664 |

| work_ratings_count | 4942365 | 4800065 | 3916824 | 3340896 | 2773745 |

| work_text_reviews_count | 155254 | 75867 | 95009 | 72586 | 51992 |

| ratings_1 | 66715 | 75504 | 456191 | 60427 | 86236 |

| ratings_2 | 127936 | 101676 | 436802 | 117415 | 197621 |

| ratings_3 | 560092 | 455024 | 793319 | 446835 | 606158 |

| ratings_4 | 1481305 | 1156318 | 875073 | 1001952 | 936012 |

| ratings_5 | 2706317 | 3011543 | 1355439 | 1714267 | 947718 |

| image_url | https://images.gr-assets.com/books/1447303603m... | https://images.gr-assets.com/books/1474154022m... | https://images.gr-assets.com/books/1361039443m... | https://images.gr-assets.com/books/1361975680m... | https://images.gr-assets.com/books/1490528560m... |

| small_image_url | https://images.gr-assets.com/books/1447303603s... | https://images.gr-assets.com/books/1474154022s... | https://images.gr-assets.com/books/1361039443s... | https://images.gr-assets.com/books/1361975680s... | https://images.gr-assets.com/books/1490528560s... |

We have 10k books

len(books)

10000

Ratings are sorted chronologically, oldest first.

display_all(ratings.head())

| user_id | book_id | rating | |

|---|---|---|---|

| 0 | 1 | 258 | 5 |

| 1 | 2 | 4081 | 4 |

| 2 | 2 | 260 | 5 |

| 3 | 2 | 9296 | 5 |

| 4 | 2 | 2318 | 3 |

ratings.rating.min(), ratings.rating.max()

(1, 5)

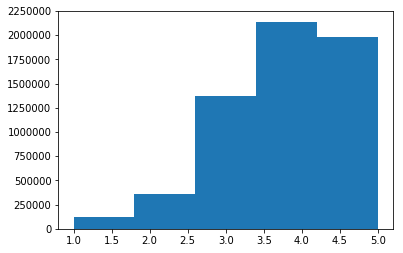

ratings.rating.hist( bins = 5, grid=False)

It appears that 4 is the most popular rating. There are relatively few ones and twos.

len(ratings)

5976479

Most books have a few hundred reviews, but some have as few as eight.

reviews_per_book = ratings.groupby( 'book_id' ).book_id.apply( lambda x: len( x ))

reviews_per_book.to_frame().describe()

| book_id | |

|---|---|

| count | 10000.000000 |

| mean | 597.647900 |

| std | 1267.289788 |

| min | 8.000000 |

| 25% | 155.000000 |

| 50% | 248.000000 |

| 75% | 503.000000 |

| max | 22806.000000 |

Train test split

Of course we need to first follow the best practices and split the data into training and testing sets.

from sklearn.model_selection import train_test_split

train_df, test_df = train_test_split(ratings,

stratify=ratings['user_id'],

test_size=0.20,

)

len(train_df), len(test_df)

(4781183, 1195296)

Evaluation metrics

Let’s think a bit about how would we measure a recommendation engine..

Any ideas?

If you say either of {precision, recall, f-score, accuracy} you’re wrong

Top-N accuracy metrics

… are a class of metrics that are called Top-N accuracy metrics, which evaluate the accuracy of the top recommendations provided to a user, comparing to the items the user has actually interacted in test set.

- Recall@N

- given a list [n, n, n, p, n, n, …]

- N the top most important results, how often does p is among the returned N values

- Has variants Recall@5, Recall@10, etc..

- NDCG@N (Normalized Discounted Cumulative Gain @ N)

- A recommender returns some items and we’d like to compute how good the list is. Each item has a relevance score, usually a non-negative number. That’s gain.

- Now we add up those scores; that’s cumulative gain.

- We’d prefer to see the most relevant items at the top of the list, therefore before summing the scores we divide each by a growing number (usually a logarithm of the item position) - that’s discounting

- DCGs are not directly comparable between users, so we normalize them.

- MAP@N (Mean Average Precision)

Popularity model

A common baseline approach is the Popularity model.

This model is not actually personalized - it simply recommends to a user the most popular items that the user has not previously consumed.

As the popularity accounts for the “wisdom of the crowds”, it usually provides good recommendations, generally interesting for most people.

book_ratings = ratings.groupby('book_id').size().reset_index(name='users')

book_popularity = ratings.groupby('book_id')['rating'].sum().sort_values(ascending=False).reset_index()

book_popularity = pd.merge(book_popularity, book_ratings, how='inner', on=['book_id'])

book_popularity = pd.merge(book_popularity, books[['book_id', 'title', 'authors']], how='inner', on=['book_id'])

book_popularity = book_popularity.sort_values(by=['rating'], ascending=False)

book_popularity.head()

| book_id | rating | users | title | authors | |

|---|---|---|---|---|---|

| 0 | 1 | 97603 | 22806 | The Hunger Games (The Hunger Games, #1) | Suzanne Collins |

| 1 | 2 | 95077 | 21850 | Harry Potter and the Sorcerer's Stone (Harry P... | J.K. Rowling, Mary GrandPré |

| 2 | 4 | 82639 | 19088 | To Kill a Mockingbird | Harper Lee |

| 3 | 18 | 70059 | 15855 | Harry Potter and the Prisoner of Azkaban (Harr... | J.K. Rowling, Mary GrandPré, Rufus Beck |

| 4 | 25 | 69265 | 15304 | Harry Potter and the Deathly Hallows (Harry Po... | J.K. Rowling, Mary GrandPré |

Unfortunately, this strategy depends on what we rank on….

Let’s imagine we have another way to rank this list. (Above, books are sorted by their popularity, as measured by number of ratings.)

Let’s imagine we want to sort books by their average rating ( i.e. sum(ratings) / len(ratings))

book_popularity.rating = book_popularity.rating / book_popularity.users

book_popularity = book_popularity.sort_values(by=['rating'], ascending=False)

book_popularity.head(n=20)

| book_id | rating | users | title | authors | |

|---|---|---|---|---|---|

| 2108 | 3628 | 4.829876 | 482 | The Complete Calvin and Hobbes | Bill Watterson |

| 9206 | 7947 | 4.818182 | 88 | ESV Study Bible | Anonymous, Lane T. Dennis, Wayne A. Grudem |

| 6648 | 9566 | 4.768707 | 147 | Attack of the Deranged Mutant Killer Monster S... | Bill Watterson |

| 4766 | 6920 | 4.766355 | 214 | The Indispensable Calvin and Hobbes | Bill Watterson |

| 5702 | 8978 | 4.761364 | 176 | The Revenge of the Baby-Sat | Bill Watterson |

| 3904 | 6361 | 4.760456 | 263 | There's Treasure Everywhere: A Calvin and Hobb... | Bill Watterson |

| 4228 | 6590 | 4.757202 | 243 | The Authoritative Calvin and Hobbes: A Calvin ... | Bill Watterson |

| 2695 | 4483 | 4.747396 | 384 | It's a Magical World: A Calvin and Hobbes Coll... | Bill Watterson |

| 3627 | 3275 | 4.736842 | 285 | Harry Potter Boxed Set, Books 1-5 (Harry Potte... | J.K. Rowling, Mary GrandPré |

| 1579 | 1788 | 4.728528 | 652 | The Calvin and Hobbes Tenth Anniversary Book | Bill Watterson |

| 4047 | 5207 | 4.722656 | 256 | The Days Are Just Packed: A Calvin and Hobbes ... | Bill Watterson |

| 9659 | 8946 | 4.720000 | 75 | The Divan | Hafez |

| 1067 | 1308 | 4.718114 | 933 | A Court of Mist and Fury (A Court of Thorns an... | Sarah J. Maas |

| 5938 | 9141 | 4.711765 | 170 | The Way of Kings, Part 1 (The Stormlight Archi... | Brandon Sanderson |

| 681 | 862 | 4.702840 | 1373 | Words of Radiance (The Stormlight Archive, #2) | Brandon Sanderson |

| 4445 | 3753 | 4.699571 | 233 | Harry Potter Collection (Harry Potter, #1-6) | J.K. Rowling |

| 3900 | 5580 | 4.689139 | 267 | The Calvin and Hobbes Lazy Sunday Book | Bill Watterson |

| 6968 | 8663 | 4.680851 | 141 | Locke & Key, Vol. 6: Alpha & Omega | Joe Hill, Gabriel Rodríguez |

| 5603 | 8109 | 4.677596 | 183 | The Absolute Sandman, Volume One | Neil Gaiman, Mike Dringenberg, Chris Bachalo, ... |

| 7743 | 8569 | 4.669355 | 124 | Styxx (Dark-Hunter, #22) | Sherrilyn Kenyon |

?! Maybe this Bill Watterson pays people for good reviews..

This of course is insufficient advice for good books. Title fanatics may give high scores for unknown books. Since they are the only ones that do reviews, the books generally get good scores.

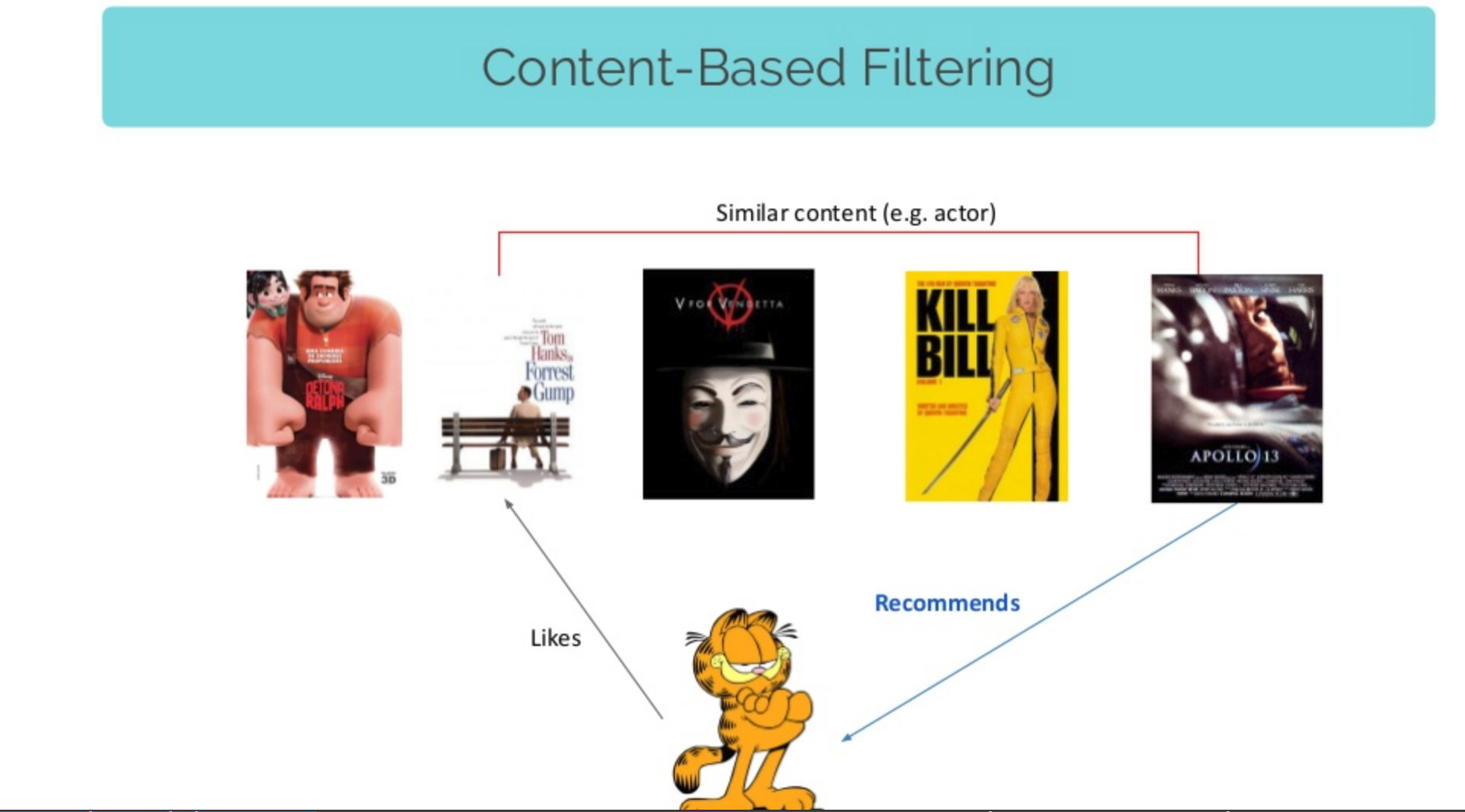

Content based filtering

The aim of this approach is to group similar object together and recommend new objects from the same categories that the user already purchased

We already have tags for books in this dataset, let’s use them!

book_tags.head()

| goodreads_book_id | tag_id | count | |

|---|---|---|---|

| 0 | 1 | 30574 | 167697 |

| 1 | 1 | 11305 | 37174 |

| 2 | 1 | 11557 | 34173 |

| 3 | 1 | 8717 | 12986 |

| 4 | 1 | 33114 | 12716 |

def get_tag_name(tag_id):

return {word for word in tags.loc[tag_id].tag_name.split('-') if word}

get_tag_name(20000)

{'in', 'midnight', 'paris'}

We’re going to accumulate all the tags of a book in a single datastructure

from tqdm import tqdm_notebook as tqdm

book_tags_dict = dict()

for book_id, tag_id, _ in tqdm(book_tags.values):

tags_of_book = book_tags_dict.setdefault(book_id, set())

tags_of_book |= get_tag_name(tag_id)

Let’s see the tags for one book

" ".join(book_tags_dict[105])

'up read literature reading ficción place i time speculative audible favorites ebooks adult 20th ciencia book chronicles owned sciencefiction audio general kindle opera gave sci bought fiction currently imaginary stories not future books herbert short fantascienza favourites sf space scifi paperback it bookshelf religion get buy re default fantasy 1980s series ficcion audiobook frank novels century fi my adventure philosophy classic home to dune and calibre in e novel on science classics american ebook shelfari unread politics f finished epic scanned s all audiobooks english own library sff'

And the book is…

books.loc[books.goodreads_book_id == 105][['book_id', 'title', 'authors']]

| book_id | title | authors | |

|---|---|---|---|

| 2816 | 2817 | Chapterhouse: Dune (Dune Chronicles #6) | Frank Herbert |

There are two types of ids in this dataset: goodreads_book_id and book_id we will make two dicts to switch from one to the other

goodread2id = {goodreads_book_id: book_id for book_id, goodreads_book_id in books[['book_id', 'goodreads_book_id']].values}

id2goodread = dict(zip(goodread2id.values(), goodread2id.keys()))

id2goodread[2817], goodread2id[105]

(105, 2817)

Then we’re going to do convert the tags into a numpy plain array that we aim to process later. The row position of a tag should match the book_id. Because these start from 1, we will add a DUMMY padding element.

import numpy as np

np_tags = np.array(sorted([[0, "DUMMY"]] + [[goodread2id[id], " ".join(tags)] for id, tags in book_tags_dict.items()]))

np_tags[:5]

array([['0', 'DUMMY'],

['1',

'read age reading i time speculative 2014 the favorites loved adult ebooks than of book owned 5 audio thriller kindle suzanne club faves favourite teen sci love currently fiction dystopian stars drama suspense action reviewed dystopia future 2011 young books ya futuristic favourites sf post 2013 borrowed trilogy scifi it games once buy distopian re default fantasy series distopia triangle audiobook novels 2010 fi my adventure romance favs contemporary lit to reads 2012 in e novel dystopias science favorite ebook shelfari finished star collins reread hunger more survival all audiobooks english apocalyptic completed coming own library'],

['2',

'read 2016 literature reading own 2015 i time 2014 favorites 2017 jk adult than childhood owned 5 audio england kindle faves favourite teen sci kids j currently fiction mystery friendship stars youth childrens young books ya k potter favourites 2013 urban scifi it bookshelf once buy re default fantasy series audiobook novels fi my grade adventure wizards shelf favs contemporary witches classic to reads children harry in rereads on novel science classics rowling favorite juvenile british ebook shelfari magic middle reread more s supernatural paranormal all audiobooks english lit library'],

['3',

'read stephenie reading finish i time high favorites 2008 adult than meh book owned 5 werewolves meyer kindle pleasures club faves teen sci guilty love fiction currently not stars drama stephanie young books ya already favourites urban scifi it bookshelf horror once re default fantasy saga series triangle audiobook novels youngadult fi my movie vampires séries romance shelf contemporary lit to vampire chick again did in on twilight movies science favorite pleasure american adults 2009 ebook dnf shelfari vamps never finished school abandoned more reread supernatural pnr first paranormal all audiobooks english romantic completed have own library'],

['4',

'crime read 2016 age lee literature reading own 2015 i time required historical 1001 the 2014 high history favorites prize family adult 20th racism you of book bookclub childhood before list owned 5 audio general kindle wish club banned faves usa favourite fiction currently mystery challenge stars drama young books ya favourites modern it buy re default race for audiobook die novels century harper my contemporary classic to reads pulitzer clàssics southern again in novel gilmore classics american favorite literary ebook shelfari realistic school rory reread must all audiobooks english coming lit library']],

dtype='<U970')

Next up we want to process the tags but if you look closely there are many words that have the same meaning but are slighly different (because of the context in which they are used).

We’d like to normalize them as much as possible so as to keep the overall vocabulary small.

This process can be accomplished through stemming and lemmatization. Let me show you an example:

stemmer = PorterStemmer()

stemmer.stem('autobiographical')

'autobiograph'

Lemmatisation is the process of grouping together the inflected forms of a word so they can be analysed as a single item, identified by the word’s lemma, or dictionary form.

Stamming, is the process of reducing inflected words to their word stem, base or root form—generally a written word form.

from nltk import word_tokenize

from nltk.stem import WordNetLemmatizer, PorterStemmer

class LemmaTokenizer(object):

def __init__(self):

self.wnl = WordNetLemmatizer()

self.stm = PorterStemmer()

def __call__(self, doc):

return [self.stm.stem(self.wnl.lemmatize(t)) for t in word_tokenize(doc)]

After this we’re ready to process the data.

We will build a sklearn pipeline to process the tags.

We will first be using a tf-idf metric customized to tokenize words with the above implemented Lemmer and Stemmer class. Then we will use a StandardScaler transform to make all the values in the resulting matrix [0, 1] bound.

from sklearn.pipeline import Pipeline

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.preprocessing import StandardScaler

p = Pipeline([

('vectorizer', TfidfVectorizer(

tokenizer=LemmaTokenizer(),

strip_accents='unicode',

ngram_range=(1, 1),

max_features=1000,

min_df=0.005,

max_df=0.5,

)),

('normalizer', StandardScaler(with_mean=False))

])

trans = p.fit_transform(np_tags[:,1])

trans.shape

(10001, 1000)

After this point, the trans variable contains a row for each book, each suck row

corresponds to a 1000 dimensional array. Each element of that 1000, is a score for the most important 1000 words that the TfidfVectorizer decided to keep (1000 words chosen from all the book tags provided).

This is the vectorized representation of the book, the vector the contains (many 0 values) the most important (cumulated for all users) words with which people tagged the books.

Next up, let’s see how many users do we have and let’s extract them into a single list.

users = ratings.set_index('user_id').index.unique().values

len(users)

53424

That’s how we get all the book ratings of a single user

ratings.loc[ratings.user_id == users[0]][['book_id', 'rating']].head()

| book_id | rating | |

|---|---|---|

| 0 | 258 | 5 |

| 75 | 268 | 3 |

| 76 | 5556 | 3 |

| 77 | 3638 | 3 |

| 78 | 1796 | 5 |

We’ll actually write a function for this because it’s rather obscure. We want all the book_ids that the user rated, along with the given rating for each book.

def books_and_ratings(user_id):

books_and_ratings_df = ratings.loc[ratings.user_id == user_id][['book_id', 'rating']]

u_books, u_ratings = zip(*books_and_ratings_df.values)

return np.array(u_books), np.array(u_ratings)

u_books, u_ratings = books_and_ratings(users[0])

u_books.shape, trans[u_books].shape

((117,), (117, 1000))

We then multiply the book’s ratings with the features of the book, to boost the features importance for this user, then add everything together into a single user specific feature vector.

user_vector = (u_ratings * trans[u_books]) / len(u_ratings)

user_vector.shape

(1000,)

If we get all the features of a book, scale each book by the user’s ratings and then do a mean on all the scaled book features as above, we actually obtain a condensed form of that user’s preferences.

So doing the above we just obtained a user_vector, a 1000 dimensional vector that expresses what the user likes, by combining his prior ratings on the books he read with the respected book_vectors.

def get_user_vector(user_id):

u_books, u_ratings = books_and_ratings(user_id)

u_books_features = trans[u_books]

u_vector = (u_ratings * u_books_features) / len(u_ratings)

return u_vector

def get_user_vectors():

user_vectors = np.zeros((len(users), 1000))

for user_id in tqdm(users[:1000]):

u_vector = get_user_vector(user_id)

user_vectors[user_id, :] = u_vector

return user_vectors

user_vectors = get_user_vectors()

HBox(children=(IntProgress(value=0, max=1000), HTML(value='')))

The pipeline transformation also keeps the most important 1000 words. Let’s see a sample of them now..

trans_feature_names = p.named_steps['vectorizer'].get_feature_names()

np.random.permutation(np.array(trans_feature_names))[:100]

array(['guilti', 'america', 'roman', 'witch', '1970', 'scott', 'hous',

'occult', 'michael', 'winner', 'nonfic', 'improv', 'australia',

'pre', 'britain', 'london', 'tear', 'comicbook', 'steami', 'arab',

'young', 'alex', 'literatura', 'man', 'altern', 's', 'sport',

'warfar', 'california', 'americana', 'keeper', '311', 'era',

'life', 'heroin', 'urban', 'sexi', '18th', 'were', 'black',

'super', 'goodread', 'nativ', 'روايات', 'novella', 'great',

'youth', 'pleasur', 'mayb', 'canadiana', 'childhood', 'realli',

'be', 'home', 'pictur', 'for', 'all', 'guardian', 'race',

'investig', 'earli', 'easi', 'latin', '314', 'long', 'seen', '20',

'polit', 'group', 'fave', 'abus', 'lendabl', 'clasico', 'essay',

'punk', 'town', 'biblic', 'mental', 'oprah', 'fantasia', 'tween',

'dean', 'asia', 'jane', 'epub', 'hilari', 'as', 'singl', '33',

'new', 'ministri', 'psycholog', 'sweet', 'jennif', 'orphan', '8th',

'idea', 'neurosci', 'natur', 'boyfriend'], dtype='<U16')

Once we have the user_vectors computed, we want to show the most important 20 words for it.

user_id = 801

pd.DataFrame(

sorted(

zip(

trans_feature_names,

user_vectors[user_id].flatten().tolist()

),

key=lambda x: -x[1]

)[:20],

columns=['token', 'relevance']

).T

| 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | 17 | 18 | 19 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| token | club | group | bookclub | literatur | abandon | literari | gener | t | centuri | didn | memoir | drama | shelfari | histori | biographi | recommend | non | did | borrow | not |

| relevance | 6.32432 | 6.20145 | 5.94722 | 5.46231 | 5.01933 | 4.88025 | 4.53921 | 4.39075 | 4.36124 | 4.25427 | 4.21645 | 4.1113 | 4.10304 | 4.07408 | 4.07243 | 3.99759 | 3.99382 | 3.89373 | 3.82424 | 3.80188 |

The last piece of the puzzle at this stage is making a link between the book_vectors and the user_vectors.

Since both of them are computed on the same feature space, we simply need a distance metric that would rank a given user_vector to all the book_vectors.

One such metric is the cosine_similarity that we’re using bellow. It computes a distance between two n-dimensional vectors and returns a score between -1 and 1 where:

- something close to -1 means that the vectors have opposite relations (e.g. comedy and drama)

- something close to 0 means that the vectors have no relation between them

- something close to 1 means that the vectors are quite similar

The code bellow will compute a recommendation for a single user.

from sklearn.metrics.pairwise import cosine_similarity

user_id = 100

cosine_similarities = cosine_similarity(np.expand_dims(user_vectors[user_id], 0), trans)

similar_indices = cosine_similarities.argsort().flatten()[-20:]

similar_indices

array([ 194, 172, 7810, 3085, 213, 180, 35, 872, 3508, 5374, 8456,

397, 3913, 1015, 8233, 162, 629, 2926, 4531, 323])

Which translates to the following books:

books.loc[books.book_id.isin(similar_indices)][['title', 'authors']]

| title | authors | |

|---|---|---|

| 34 | The Alchemist | Paulo Coelho, Alan R. Clarke |

| 161 | The Stranger | Albert Camus, Matthew Ward |

| 171 | Anna Karenina | Leo Tolstoy, Louise Maude, Leo Tolstoj, Aylmer... |

| 179 | Siddhartha | Hermann Hesse, Hilda Rosner |

| 193 | Moby-Dick or, The Whale | Herman Melville, Andrew Delbanco, Tom Quirk |

| 212 | The Metamorphosis | Franz Kafka, Stanley Corngold |

| 322 | The Unbearable Lightness of Being | Milan Kundera, Michael Henry Heim |

| 396 | Perfume: The Story of a Murderer | Patrick Süskind, John E. Woods |

| 628 | Veronika Decides to Die | Paulo Coelho, Margaret Jull Costa |

| 871 | The Plague | Albert Camus, Stuart Gilbert |

| 1014 | Steppenwolf | Hermann Hesse, Basil Creighton |

| 2925 | The Book of Laughter and Forgetting | Milan Kundera, Aaron Asher |

| 3084 | Narcissus and Goldmund | Hermann Hesse, Ursule Molinaro |

| 3507 | Swann's Way (In Search of Lost Time, #1) | Marcel Proust, Simon Vance, Lydia Davis |

| 3912 | Immortality | Milan Kundera |

| 4530 | The Joke | Milan Kundera |

| 5373 | Laughable Loves | Milan Kundera, Suzanne Rappaport |

| 7809 | Slowness | Milan Kundera, Linda Asher |

| 8232 | The Book of Disquiet | Fernando Pessoa, Richard Zenith |

| 8455 | Life is Elsewhere | Milan Kundera, Aaron Asher |

This approach gives way better predictions than the popularity model but, on the other hand, this relies on us having the content of the books (tags, book_tags, etc..) which we might not have, or might add a new level of complexity (parsing, cleaning, summarizing, etc…).

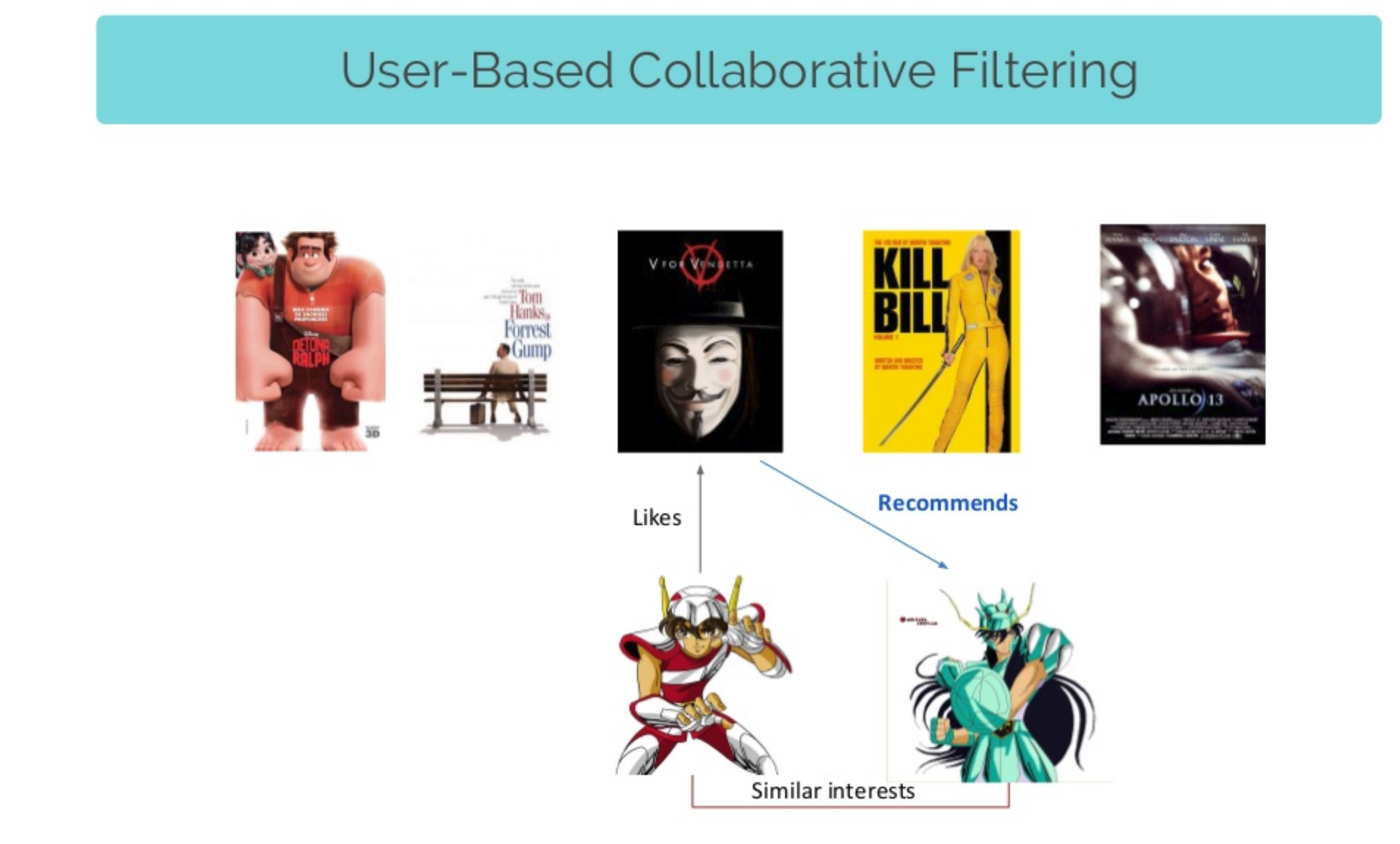

Collaborative filtering

The idea of this approach is that users fall into interest buckets.

If we are able so say that user A and user B fall in that same bucket (both may like history books), then whatever A liked, B might also like.

books.head()

| book_id | goodreads_book_id | best_book_id | work_id | books_count | isbn | isbn13 | authors | original_publication_year | original_title | ... | ratings_count | work_ratings_count | work_text_reviews_count | ratings_1 | ratings_2 | ratings_3 | ratings_4 | ratings_5 | image_url | small_image_url | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 1 | 2767052 | 2767052 | 2792775 | 272 | 439023483 | 9.780439e+12 | Suzanne Collins | 2008.0 | The Hunger Games | ... | 4780653 | 4942365 | 155254 | 66715 | 127936 | 560092 | 1481305 | 2706317 | https://images.gr-assets.com/books/1447303603m... | https://images.gr-assets.com/books/1447303603s... |

| 1 | 2 | 3 | 3 | 4640799 | 491 | 439554934 | 9.780440e+12 | J.K. Rowling, Mary GrandPré | 1997.0 | Harry Potter and the Philosopher's Stone | ... | 4602479 | 4800065 | 75867 | 75504 | 101676 | 455024 | 1156318 | 3011543 | https://images.gr-assets.com/books/1474154022m... | https://images.gr-assets.com/books/1474154022s... |

| 2 | 3 | 41865 | 41865 | 3212258 | 226 | 316015849 | 9.780316e+12 | Stephenie Meyer | 2005.0 | Twilight | ... | 3866839 | 3916824 | 95009 | 456191 | 436802 | 793319 | 875073 | 1355439 | https://images.gr-assets.com/books/1361039443m... | https://images.gr-assets.com/books/1361039443s... |

| 3 | 4 | 2657 | 2657 | 3275794 | 487 | 61120081 | 9.780061e+12 | Harper Lee | 1960.0 | To Kill a Mockingbird | ... | 3198671 | 3340896 | 72586 | 60427 | 117415 | 446835 | 1001952 | 1714267 | https://images.gr-assets.com/books/1361975680m... | https://images.gr-assets.com/books/1361975680s... |

| 4 | 5 | 4671 | 4671 | 245494 | 1356 | 743273567 | 9.780743e+12 | F. Scott Fitzgerald | 1925.0 | The Great Gatsby | ... | 2683664 | 2773745 | 51992 | 86236 | 197621 | 606158 | 936012 | 947718 | https://images.gr-assets.com/books/1490528560m... | https://images.gr-assets.com/books/1490528560s... |

5 rows × 23 columns

Our goal here is to express each user and each book into some semantic representation derived from the ratings we have.

We will model each id (both user_id, and book_id) as a hidden latent variable sequence (also called embedding). The user_id embeddings would represent that user’s personal tastes. The book_id embeddings would represent the book characteristics.

We then assume that the rating of a user would be the product between his personal tastes (the user’s embeddings) multiplied with the books characteristics (the book embeddings).

Basically, this means we will try to model the formula

rating = user_preferences * book_charatersitcs + user_bias + book_bias

user_biasis a tendency of a user to give higher or lower scores.book_biasis a tendency of a book to be more known, publicized, talked about so rated higher because of this.

We expect that while training, the ratings will back-propagate enough information into the embeddings so as to jointly decompose both the user_preferences vectors and book_characteristics vectors.

from keras.layers import Input, Embedding, Flatten

from keras.layers import Input, InputLayer, Dense, Embedding, Flatten

from keras.layers.merge import dot, add

from keras.engine import Model

from keras.regularizers import l2

from keras.optimizers import Adam

hidden_factors = 10

user = Input(shape=(1,))

emb_u_w = Embedding(input_length=1, input_dim=len(users), output_dim=hidden_factors)

emb_u_b = Embedding(input_length=1, input_dim=len(users), output_dim=1)

book = Input(shape=(1,))

emb_b_w = Embedding(input_length=1, input_dim=len(books), output_dim=hidden_factors)

emb_b_b = Embedding(input_length=1, input_dim=len(books), output_dim=1)

merged = dot([

Flatten()(emb_u_w(user)),

Flatten()(emb_b_w(book))

], axes=-1)

merged = add([merged, Flatten()(emb_u_b(user))])

merged = add([merged, Flatten()(emb_b_b(book))])

model = Model(inputs=[user, book], outputs=merged)

model.summary()

model.compile(optimizer='adam', loss='mse')

model.optimizer.lr=0.001

__________________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

==================================================================================================

input_32 (InputLayer) (None, 1) 0

__________________________________________________________________________________________________

input_33 (InputLayer) (None, 1) 0

__________________________________________________________________________________________________

embedding_62 (Embedding) (None, 1, 10) 534240 input_32[0][0]

__________________________________________________________________________________________________

embedding_64 (Embedding) (None, 1, 10) 100000 input_33[0][0]

__________________________________________________________________________________________________

flatten_60 (Flatten) (None, 10) 0 embedding_62[0][0]

__________________________________________________________________________________________________

flatten_61 (Flatten) (None, 10) 0 embedding_64[0][0]

__________________________________________________________________________________________________

embedding_63 (Embedding) (None, 1, 1) 53424 input_32[0][0]

__________________________________________________________________________________________________

dot_9 (Dot) (None, 1) 0 flatten_60[0][0]

flatten_61[0][0]

__________________________________________________________________________________________________

flatten_62 (Flatten) (None, 1) 0 embedding_63[0][0]

__________________________________________________________________________________________________

embedding_65 (Embedding) (None, 1, 1) 10000 input_33[0][0]

__________________________________________________________________________________________________

add_15 (Add) (None, 1) 0 dot_9[0][0]

flatten_62[0][0]

__________________________________________________________________________________________________

flatten_63 (Flatten) (None, 1) 0 embedding_65[0][0]

__________________________________________________________________________________________________

add_16 (Add) (None, 1) 0 add_15[0][0]

flatten_63[0][0]

==================================================================================================

Total params: 697,664

Trainable params: 697,664

Non-trainable params: 0

__________________________________________________________________________________________________

train_df.head()

| user_id | book_id | rating | |

|---|---|---|---|

| 2492982 | 12364 | 889 | 4 |

| 1374737 | 5905 | 930 | 3 |

| 4684686 | 49783 | 2398 | 3 |

| 5951422 | 27563 | 438 | 4 |

| 5588313 | 44413 | 4228 | 4 |

raw_data = train_df[['user_id', 'book_id', 'rating']].values

raw_valid = test_df[['user_id', 'book_id', 'rating']].values

u = raw_data[:,0] - 1

b = raw_data[:,1] - 1

r = raw_data[:,2]

vu = raw_data[:,0] - 1

vb = raw_data[:,1] - 1

vr = raw_data[:,2]

model.fit(x=[u, b], y=r, validation_data=([vu, vb], vr), epochs=1)

Train on 100000 samples, validate on 30000 samples

Epoch 1/1

100000/100000 [==============================] - 36s 365us/step - loss: 8.6191 - val_loss: 7.1418

After the training is done, we can retrieve the embedding values for the books, the users and the biases in order to reproduce the computations ourselves for a single user.

book_embeddings = emb_b_w.get_weights()[0]

book_embeddings.shape, "10000 books each with 10 hidden features (the embedding)"

((10000, 10), '10000 books each with 10 hidden features (the embedding)')

user_embeddings = emb_u_w.get_weights()[0]

user_embeddings.shape, "54k users each with 10 hidden preferences"

((53424, 10), '54k users each with 10 hidden preferences')

user_bias = emb_u_b.get_weights()[0]

book_bias = emb_b_b.get_weights()[0]

user_bias.shape, book_bias.shape, "every user and book has a specific bias"

((53424, 1), (10000, 1), 'every user and book has a specific bias')

Know, let’s recompute the formula

\[bookRating(b, u) = userEmbedding(u) * bookEmbedding(b) + bookBias(b) + userBias(u)\]And do this for every book, if we have a specific user set.

this_user = 220

books_ranked_for_user = (np.dot(book_embeddings, user_embeddings[this_user]) + user_bias[this_user] + book_bias.flatten())

books_ranked_for_user.shape

(10000,)

We get back 10000 ratings, one for each book, scores computed specifically for this user’s tastes.

We can now sort the ratings and get the 10 most “interesting” ones.

best_book_ids = np.argsort(books_ranked_for_user)[-10:]

best_book_ids

array([19, 17, 22, 23, 24, 16, 3, 26, 0, 1])

Which decode to…

books.loc[books.book_id.isin(best_book_ids)][['title', 'authors']]

| title | authors | |

|---|---|---|

| 0 | The Hunger Games (The Hunger Games, #1) | Suzanne Collins |

| 2 | Twilight (Twilight, #1) | Stephenie Meyer |

| 15 | The Girl with the Dragon Tattoo (Millennium, #1) | Stieg Larsson, Reg Keeland |

| 16 | Catching Fire (The Hunger Games, #2) | Suzanne Collins |

| 18 | The Fellowship of the Ring (The Lord of the Ri... | J.R.R. Tolkien |

| 21 | The Lovely Bones | Alice Sebold |

| 22 | Harry Potter and the Chamber of Secrets (Harry... | J.K. Rowling, Mary GrandPré |

| 23 | Harry Potter and the Goblet of Fire (Harry Pot... | J.K. Rowling, Mary GrandPré |

| 25 | The Da Vinci Code (Robert Langdon, #2) | Dan Brown |

Again you can imagine this being all this code tied up into a single system.

Conclusions

- Recommender systems are (needed) everywhere

- The metrics used to evaluate one are more exotic (ex. NDCG@N, MAP@N, etc..)

- We’ve shown how to implement 3 recommendation engine models:

- Popularity model

- Content based model

- Collaboration based model

- The collaboration based models have the lowest needs of data (information) among the three

Comments